If you have been paying any attention to AI over the last three years, you have probably been told - repeatedly - that what matters most is the model.

Which company has the biggest 'brain'.

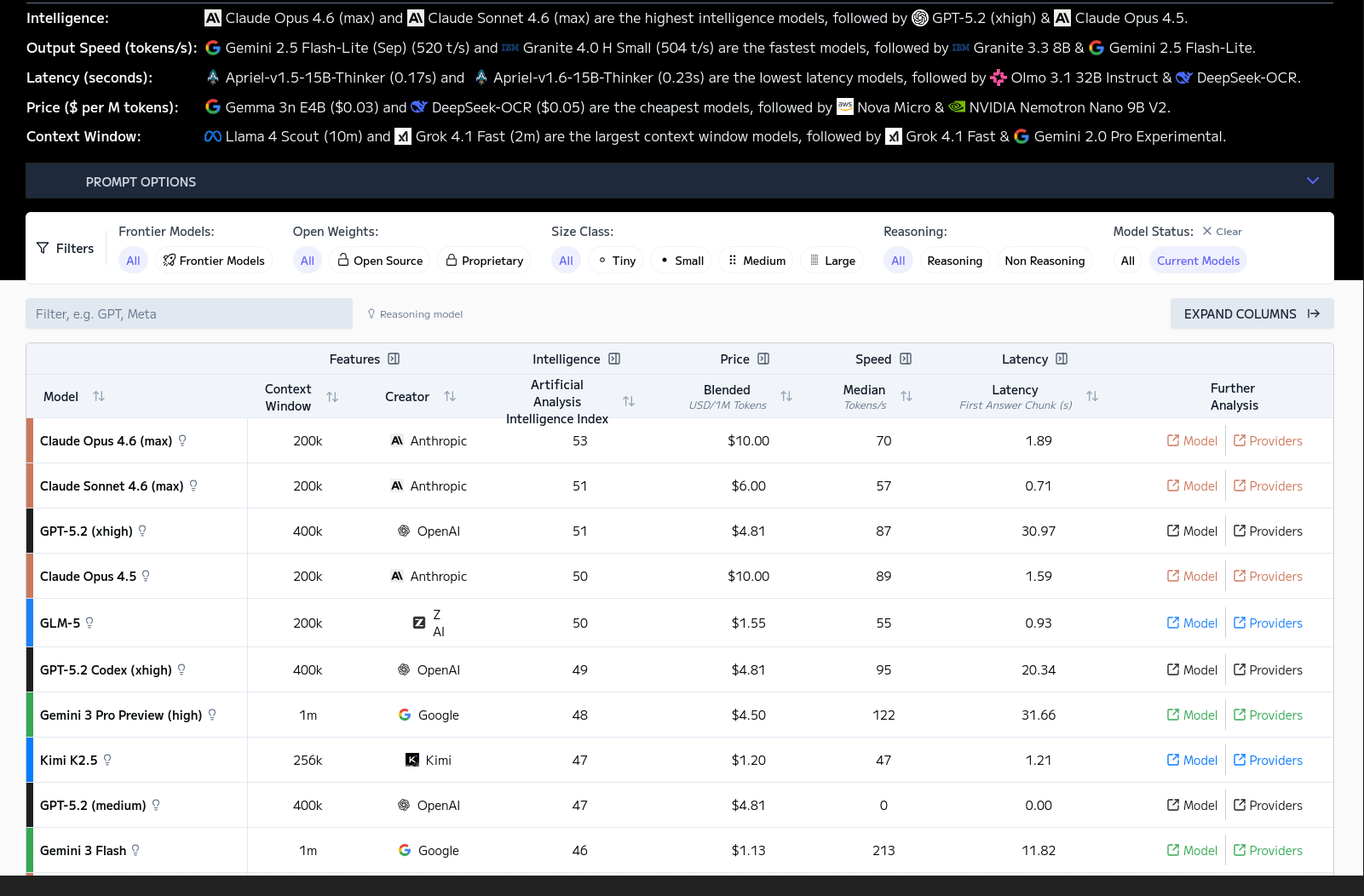

Which benchmark score is highest.

Which release is going to "change everything."

I get it. I have spent the last two years helping business leaders make sense of AI, and most of the time, the model really was the story.

Every few months, OpenAI, Google, or Anthropic would release something new, and we would all scramble to figure out what it meant. I am on that treadmill too.

But in early 2026, if you are still focused on which AI is smartest, you may be watching the wrong race.

Whether you are using the latest ChatGPT, Claude, or Gemini, the raw intelligence is practically indistinguishable for most professional work.

They can all code, write, and analyse data to a standard that exceeds most of us. There are still differences at the edges - complex multilingual reasoning, specialised domains - but for the work that fills your average weekday, the gap has closed.

So if the brains are all comparable, why does one AI feel like a confused intern while another feels like a capable colleague?

The answer is infrastructure. We are no longer competing on the model. We are competing on the harness.

As it happens, the Chinese New Year that began on 17th February marks the Year of the Horse - the Fire Horse, specifically, symbolising energy, momentum, and forward motion.

A horse without a harness is powerful but may not take you where you want to go.

A harness without a horse is useless.

The timing feels apt for 2026.

What an AI Harness Actually Is

If you want to understand why - for many, many people - AI suddenly started to feel capable of real work in early 2026, you need to look at the difference between the harness and the model.

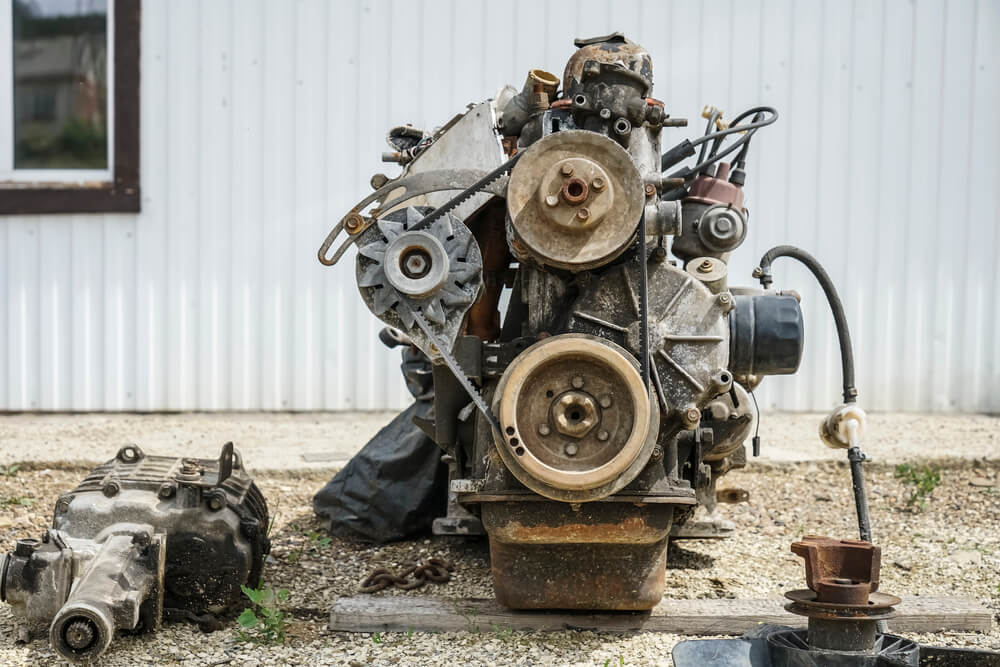

I have been using a car analogy for a while now when I talk about AI models and applications, and it works here (like most analogies it's imperfect, but still useful).

The AI Language Model is the engine.

GPT-5.2, Claude 4.6, Gemini 3 Pro - these are the raw, underlying brains. Language powerhouses, incredibly good at writing and now quite good at emulating reasoning.

But an engine sitting on the floor of a garage won't take you anywhere, no matter how powerful it is.

Enormous capability, no way to interact with the world.

The App is the dashboard and steering wheel. ChatGPT.com, Gemini Workspace - these are the user interfaces the companies build so that you can talk to the engine. (Some people call it the "product", and it's usually owned by the "VP of Product" role.)

This is the interface that makes the power accessible and useful.

The Harness is the transmission, wheels, and body. Claude Cowork, OpenAI Operator, OpenClaw - this is the layer that sits between the App and the Model. If the App is how you talk to the AI, the Harness is how the AI talks to the world.

That distinction matters enormously.

Back in 2023 and 2024, the industry dismissed anything built on top of a model as a "wrapper" - a thin, disposable layer of interface that added no real value. And that criticism was fair when all you were doing was putting a chat box in front of an API. But the Harness is not a wrapper. A wrapper is stateless - it forgets everything the moment you close the tab. A Harness is stateful and persistent. It remembers your work, connects to your tools, and manages the AI's behaviour over time.

The difference is architectural. And it is the reason AI went from "interesting toy" to "useful colleague" in the space of a few months.

From Chatting to Doing

For years, we interacted with AI without a Harness. You typed a prompt, the AI predicted the next word, and it produced a text response. Useful, but limited. (Think of it as having a very fast intern who can only communicate by passing notes under a door.)

A modern Harness changes the equation in three important ways.

First, it gives the AI memory. Instead of starting from scratch every time you open a new window, a Harness remembers your projects, your preferences, and the context of your work across weeks and months. When the AI's working memory fills up - which happens faster than you might expect, even with context windows of 150,000 words or more - a well-built Harness will pause, take structured notes about where it was, clear its memory, and pick up again from the notes.

Ethan Mollick, a professor at Wharton who has been writing about AI tools extensively, compared this to the protagonist in the film Memento - waking up with no memory but reading the notes tattooed on his body to figure out what he was doing. It is an imperfect workaround - subtle context gets lost in the summarising, which is exactly why human oversight still matters - but it means an AI can now sustain work across hours rather than minutes.
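For readers who think in code, here is a minimal sketch of that note-taking cycle. Everything in it is an assumption made for illustration: the `Session` class, the word-count limit standing in for a context window, and especially `summarise`, which simply truncates each entry where a real harness would ask the model to write the notes.

```python
from dataclasses import dataclass, field


def summarise(entries: list[str]) -> str:
    """Placeholder summary: keep the first five words of each entry.

    A real harness would ask the model to write this; the crude truncation
    also shows how subtle detail gets lost along the way.
    """
    return "; ".join(" ".join(entry.split()[:5]) for entry in entries)


@dataclass
class Session:
    """Hypothetical harness session: bounded working memory plus durable notes."""
    max_words: int                                      # stand-in for the context limit
    notes: list[str] = field(default_factory=list)      # survives compaction
    context: list[str] = field(default_factory=list)    # cleared on compaction

    def words_used(self) -> int:
        # Only the working context is counted; notes are assumed to be small.
        return sum(len(entry.split()) for entry in self.context)

    def add(self, entry: str) -> None:
        """Record a step, compacting first if it would overflow working memory."""
        if self.context and self.words_used() + len(entry.split()) > self.max_words:
            self.compact()
        self.context.append(entry)

    def compact(self) -> None:
        """Pause, take notes on the work so far, then clear working memory."""
        self.notes.append(summarise(self.context))
        self.context.clear()

    def prompt(self) -> str:
        """What the model would see next: the notes first, then recent steps."""
        return "\n".join([f"NOTES: {note}" for note in self.notes] + self.context)
```

With a ten-word limit, adding two six-word steps triggers one compaction: the first step survives only as a five-word note, which is the Memento effect in miniature.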

Second, it gives the AI hands. A raw AI model can write a line of code or draft an email. A Harness gives it the tools to actually open your codebase, test the code, open your email client, and send the message. This is where the Model Context Protocol (MCP) comes in - an open standard, originally created by Anthropic and now adopted across the industry, that gives AI a universal way to connect to external tools and data sources. Think of it as a common plug that lets the AI talk to your database, your file system, your browser, and your business applications through a single standard interface rather than needing a custom connection for each one.
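To make the "common plug" idea concrete, here is a rough sketch in plain Python. It does not use MCP or any real SDK; the `tool` decorator, the `TOOLS` registry, and the request format are all hypothetical. The point is only the shape: every tool is described the same way and called through one entry point.

```python
from typing import Callable

# Hypothetical registry: tool name -> (description, function).
TOOLS: dict[str, tuple[str, Callable[..., str]]] = {}


def tool(name: str, description: str):
    """Register a function under a name the model can ask for."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = (description, fn)
        return fn
    return register


@tool("read_file", "Return the contents of a text file")
def read_file(path: str) -> str:
    with open(path, encoding="utf-8") as handle:
        return handle.read()


@tool("word_count", "Count the words in a piece of text")
def word_count(text: str) -> str:
    return str(len(text.split()))


def list_tools() -> list[dict[str, str]]:
    """The menu of available tools that the harness shows the model."""
    return [{"name": name, "description": desc} for name, (desc, _) in TOOLS.items()]


def call_tool(request: dict) -> str:
    """Single entry point: every tool is invoked the same way."""
    name = request["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    _, fn = TOOLS[name]
    return fn(**request.get("arguments", {}))
```

A request such as `call_tool({"name": "word_count", "arguments": {"text": "open the codebase"}})` is handled identically to a file read. Adding a new capability means registering one more function rather than building a new connection.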

Third, it acts as a manager. Left unsupervised, AI will hallucinate. A well-built Harness forces the AI to pause, check its work, verify sources against live information, and ask for your approval before doing anything irreversible.

This is important because without that management layer, you are essentially giving a very confident, very fast worker the keys to your office and hoping for the best.
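The approval step is the simplest of the three to sketch. The example below is an illustration under stated assumptions, not how any particular product works: the `IRREVERSIBLE` list, `run_action`, and `ask_on_console` are hypothetical names, and which actions count as irreversible is something you decide rather than the AI.

```python
from typing import Callable

# Assumption: a human-maintained list of actions that cannot be undone.
IRREVERSIBLE = {"send_email", "delete_file", "make_payment"}


def run_action(
    action: str,
    detail: str,
    execute: Callable[[str, str], str],
    approve: Callable[[str, str], bool],
) -> str:
    """Run reversible actions directly; ask a human before irreversible ones."""
    if action in IRREVERSIBLE and not approve(action, detail):
        return f"blocked: {action} needs approval"
    return execute(action, detail)


def ask_on_console(action: str, detail: str) -> bool:
    """Simplest possible approval step: a yes/no prompt, defaulting to no."""
    answer = input(f"Allow {action} ({detail})? [y/N] ")
    return answer.strip().lower() == "y"
```

Drafting an email passes straight through, while sending one waits for a yes. The default answer is "no", so an unattended agent fails safe.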

What This Looks Like in Practice

The theory is interesting, but the practice is what matters. And there is one example that illustrates the Harness concept better than any diagram I could draw.

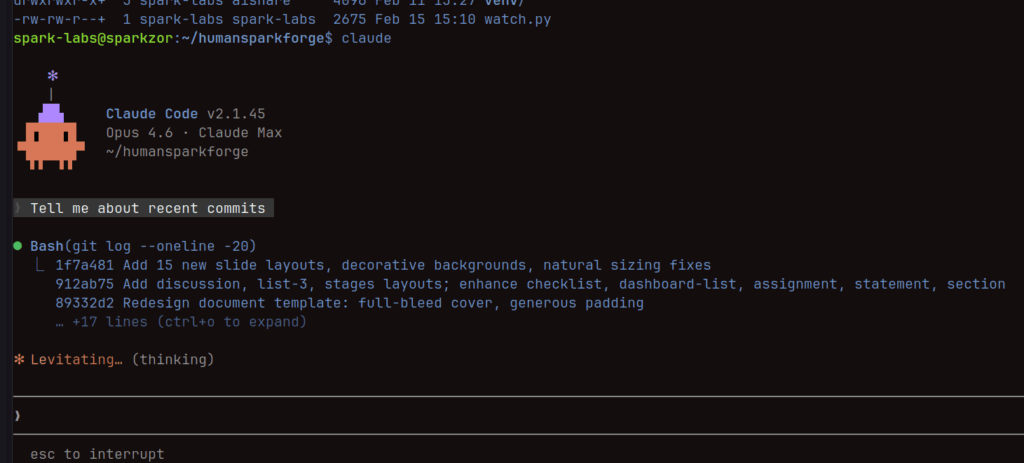

Mollick recently ran an experiment with Claude Code - a developer-focused AI tool. He gave it a single instruction: come up with a startup idea that will earn $1,000 a month, then build it. No coding knowledge required on his part.

The AI asked three clarifying questions, decided on a product (sets of 500 prompts for professional users at $39 each), and then worked independently for an hour and fourteen minutes. It created hundreds of code files, built a complete website, set up a working payment system, and deployed the whole thing. Mollick was left with a functioning online business - complete with some questionable marketing claims that the AI generated on its own initiative. (I should note: the marketing copy was dreadful. The AI may be able to build a business, but it still writes like a LinkedIn carousel.)

This is a Harness at work. The AI was not just generating text in a chat window. It was planning, building, testing, and deploying across multiple tools and systems, sustaining its effort over more than an hour, and producing a tangible output. Same engine that powers a basic chatbot conversation. Completely different outcome, because the infrastructure around it was designed to let it do real work.

Now, the previous generation of Harnesses was heavily oriented towards developers. The Claude Code interfaces look like something from a 1980s terminal.

As a recovering software engineer, I find them great - but I would not hand Claude Code to most of the business leaders I work with.

The underlying capability, though - sustained, multi-step, tool-using work - is not limited to code. It applies to research, analysis, document creation, data processing, and any workflow where you currently spend time orchestrating information across multiple systems. The developer-friendly tools are just where this technology landed first.

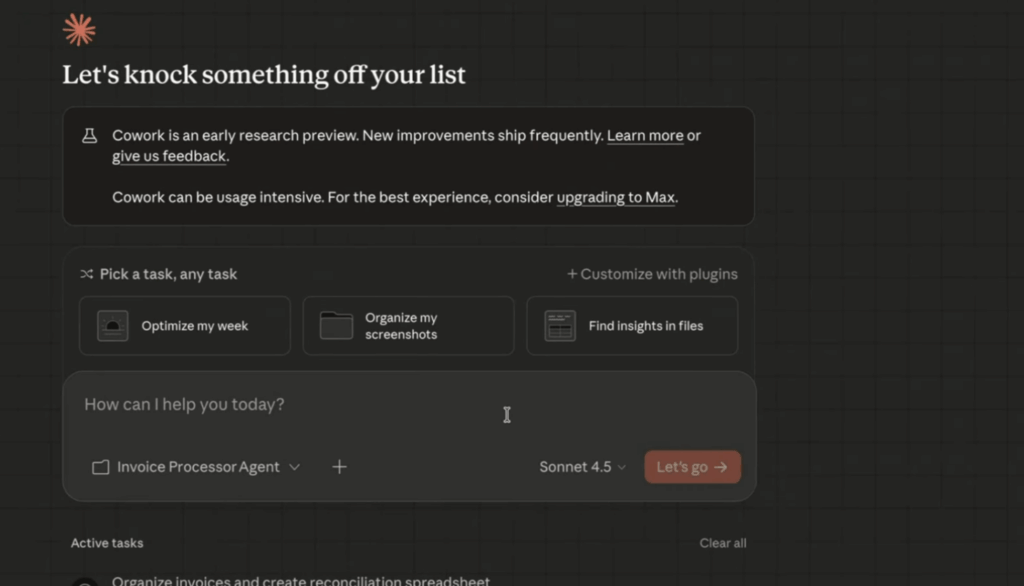

Now we have Claude Cowork, and it's a different story. Same powerful tool as Claude Code, but with a user-friendly interface.

I have been testing several of these harnesses over the past few months, both in my own work and with a handful of clients. The difference between giving someone access to a basic AI chat and giving them a properly configured Harness is stark. One client described it as the difference between having a colleague who answers emails and a colleague who actually does the project. That is the gap we are talking about.

Why Business Leaders Should Pay Attention Now

If this were purely a technology story, you could afford to wait. But the Harness is already reshaping business models in ways that affect anyone running a company or selling professional services.

In February 2026, a wave of software earnings reports triggered what analysts are calling the "SaaSpocalypse" - a significant sell-off in software stocks driven by a single realisation: AI agents do not need software seats.

For twenty years, companies like Salesforce and ServiceNow built their revenue models on a simple equation - more employees means more licences. But when an AI agent can handle Level 1 customer support or qualify sales leads, it does not need a login, a dashboard, or a per-seat subscription.

The immediate effect has been a scramble to rethink pricing. Salesforce is experimenting with charging roughly $2 per successful conversation handled by an agent - taxing the outcome rather than the user. HubSpot is moving to credit-based consumption models. The shift from "how many people use the tool" to "how much work gets done" is fundamental, and it changes the cost structure of every business that relies on software to operate.

For business owners, the practical question is not whether this shift is happening but how quickly it reaches your industry. If you are paying per-seat licences for tools where AI agents could handle a meaningful portion of the work, that cost structure is going to look increasingly difficult to justify. And if you are selling software on a per-seat model, the conversation with your customers is about to change.
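A quick back-of-the-envelope comparison shows why the pricing model matters. The $2 per conversation figure is the one mentioned above; the team size, seat price, and conversation volume are invented purely for illustration, so substitute your own numbers.

```python
def per_seat_cost(seats: int, price_per_seat: float) -> float:
    """Monthly bill when you pay for every login."""
    return seats * price_per_seat


def per_outcome_cost(conversations: int, price_per_conversation: float = 2.0) -> float:
    """Monthly bill when you pay per conversation an agent handles."""
    return conversations * price_per_conversation


def break_even_conversations(
    seats: int, price_per_seat: float, price_per_conversation: float = 2.0
) -> float:
    """Conversation volume at which the two pricing models cost the same."""
    return per_seat_cost(seats, price_per_seat) / price_per_conversation


# Hypothetical support team: 20 seats at $150 each per month.
seat_bill = per_seat_cost(20, 150.0)                # 3000.0
agent_bill = per_outcome_cost(1_000)                # 2000.0 for 1,000 conversations
break_even = break_even_conversations(20, 150.0)    # 1500.0 conversations
```

In this made-up case, outcome pricing is cheaper below 1,500 conversations a month and more expensive above it. Under the new model, your bill tracks the volume of work rather than your headcount.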

The Risks Nobody Is Talking About Enough

I would not be doing my job - and I certainly would not be living up to "Human-First AI" - if I presented the Harness as purely good news.

There is a difference between being an early adopter and being a crash test dummy. I am firmly in the first camp. I love playing with new tech, and I have been testing these tools extensively. But I would not touch some of them right now without serious precautions, and I have been saying so publicly for some time.

Let's be honest: the speed of adoption is running well ahead of the security infrastructure needed to support it.

And we have already seen what happens when that gap gets too wide.

Take OpenClaw - the open-source AI agent platform that has gone through multiple name changes in its short life (it was briefly Clawdbot, then Moltbot, then OpenClaw). The capabilities are genuinely amazing. But the project has been built rapidly, with shifting codebases and rebranding along the way, and that chaos has created openings that scammers have rushed to exploit.

In early 2026, a large-scale supply-chain attack called ClawHavoc hit the OpenClaw ecosystem. Attackers registered as marketplace developers on ClawHub and began mass-uploading trojanised "skills" - the plug-in modules that give AI agents new capabilities - disguised as crypto trading bots, productivity tools, and social media utilities. By the time security researchers had catalogued the damage, they had identified 1,184 malicious packages linked to 12 publisher accounts, with a single uploader responsible for 677 of them.

When users installed these skills, the AI agents executed the hidden code with whatever system permissions they had been granted. Because many users had given their agents broad access to "fix things" on their machines, the malware inherited those privileges. It exfiltrated cryptocurrency wallets, SSH keys, and - critically - the agents' own memory files, which contained API keys and sensitive business context.

But ClawHavoc was only the most dramatic incident. The everyday risks are just as concerning, and I have been watching them pile up.

When OpenClaw renamed their social accounts, scammers grabbed the abandoned usernames on Twitter and GitHub almost instantly. That means you can click a link that looks official - from a social account with thousands of followers - thinking you are downloading a legitimate update, and instead you are downloading malware. The branding looks right. It is an easy mistake to make.

Bad actors are posting modified copies of the software that look 99% correct. The difference is a tiny change hidden inside that extracts the API keys linked to your payment methods. Your budget can drain before the installation even finishes.

People are sharing plugins in forums and comments to help with the complex setup, but a compromised plugin could hijack your active session. Suddenly someone else is you - messaging your clients or family asking for emergency money. Two-factor authentication will not help, because they are already logged in as you.

And then there are the helpful strangers offering "Too hard to install? Give us your API key and we'll run it for you for $5 a month." You are handing a stranger your credentials. They could use your account to run their own operations on your bill.

The lesson here is not that AI agents are inherently dangerous. It is that the mental model most people bring to them is wrong. We treat a "skill" download like opening a document, when it is functionally closer to installing software. We grant agents broad permissions because restricting them feels like it defeats the purpose. And we store sensitive context in the agent's memory because that is what makes it useful.

This is where it gets personal for me.

I have spent the last year telling business leaders that AI can genuinely transform how they work, and I believe that. But I have also built my entire practice around the principle that the human stays in the loop. The human makes the decisions. The human sets the boundaries. The Harness makes AI enormously more capable, but it also makes the consequences of errors enormously more significant. A hallucination in a chat window is embarrassing. A hallucination that triggers an action - sending an email, deleting a file, executing a financial transaction - is a business problem.

So here is my practical advice: if tools like OpenClaw have been on your radar, let the dust settle for a couple of months or so. It will still be there. Do not try to figure it out over a weekend. And if you hire someone to set it up for you, make sure it is someone you would trust with your banking passwords - because functionally, that is what you are doing.

If you want AI automation now, start with established, well-tested, boring tools. The Harness is the future. But the organisations that will use it well are the ones that design clear boundaries around what the AI can do autonomously and what requires human approval.

What This Means for You

The reason I think 2026 is "The Year of the Harness" is that we have finally moved past obsessing over the engine and started building the transmission.

For anyone using AI professionally, this shifts the skill that matters. The valuable capability is no longer knowing the right prompt to make an AI sound clever. It is knowing how to choose the right Harness, connect it to your work safely, and manage the AI as it operates.

This is something I talk about as the shift from Operator to Orchestrator. When AI was just a chat box, you operated it - you typed, it responded, you typed again.

That is what I call Butler Mode: you give instructions, the AI executes. With a Harness in place, you move into Orchestrator territory. You set the objective, define the boundaries, review the output, and make the decisions that matter.

And increasingly for me, the real value comes from what I call Advisor Mode - using the AI not just to execute tasks but to think through problems with you, challenge your assumptions, and surface options you had not considered. This is what the Harness makes possible: because the AI has persistent memory of your business context, your preferences, and your past decisions, it stops acting like a task-oriented Butler and starts acting like a strategic Advisor.

Andrej Karpathy, one of the most respected AI researchers and engineers in the field, recently captured the mood among developers:

"I've never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between."

He goes on to describe the sheer volume of new concepts - agents, subagents, prompts, memory, tools, plugins, skills, MCP, workflows - that have appeared in the space of a year, and concludes that failing to adopt what is now available "feels decidedly like skill issue."

That sentiment is going to spread well beyond programming. When the Harness matures for knowledge work more broadly - and it will, quickly - the same pressure will apply to analysts, consultants, marketers, and anyone else whose work involves orchestrating information.

The good news is that the skill you need is not technical. It is managerial.

You do not need to understand how the transmission works to drive the car.

You need to know where you are going, what the road conditions are like, and when to take the wheel yourself.

We spent three years perfecting the engine. Now we are finally getting the rest of the car around it.

So here is the question I would put to you: are you still comparing engines, or have you started thinking about the Harness? Because in 2026, that is where the real advantage is going to come from.